I watch a lot of television. Once upon a time that might have been an embarrassing admission for an English professor to make—or any kind of professor, really, if your understanding of academic types is that we’re supposed to concern ourselves with purely intellectual matters, and your understanding of television is that it’s the pure antithesis of the Life Of The Mind.

That attitude has always reeked of snobbery, even before television gave us shows like The Wire and Breaking Bad. There have always been green shoots in the cultural wasteland of network television, but cultural wastelands can themselves be of academic interest. Since the rise of “prestige TV”—a terrible designation for a variety of reasons, but it seems to have stuck—and the fracturing of the televisual landscape from network to cable to streaming, there’s been an embarrassment of riches. Television that ranges from simply really good to actual narrative and visual art is pretty much de rigueur, and it’s a great deal of fun to argue what falls where on that spectrum.

So I thought I’d close out 2022 with an extremely personal and subjective rundown of what I enjoyed over the year. Listed here are just the shows that initially aired in 2022; not shown or discussed are shows from previous years I only now got around to watching because they only just now appeared on one of the streaming services I subscribe to (we watched a lot of Bob’s Burgers and What We Do in the Shadows, for example, but none of it from 2022). I’m also only talking about shows of which I watched an entire season, which seems only fair. I lost interest in, for example, She-Hulk and Outer Range after one or two episodes, but it’s entirely possible they got better as they went.

So we all understand the pool of options here, the seasons of television I saw in their entirety in 2022 are:

Andor

Bad Sisters

Better Call Saul, S6 (first half)

Derry Girls, S3

The Dropout

The Expanse, S6

Gaslit

House of the Dragon

Kids in the Hall (reboot)

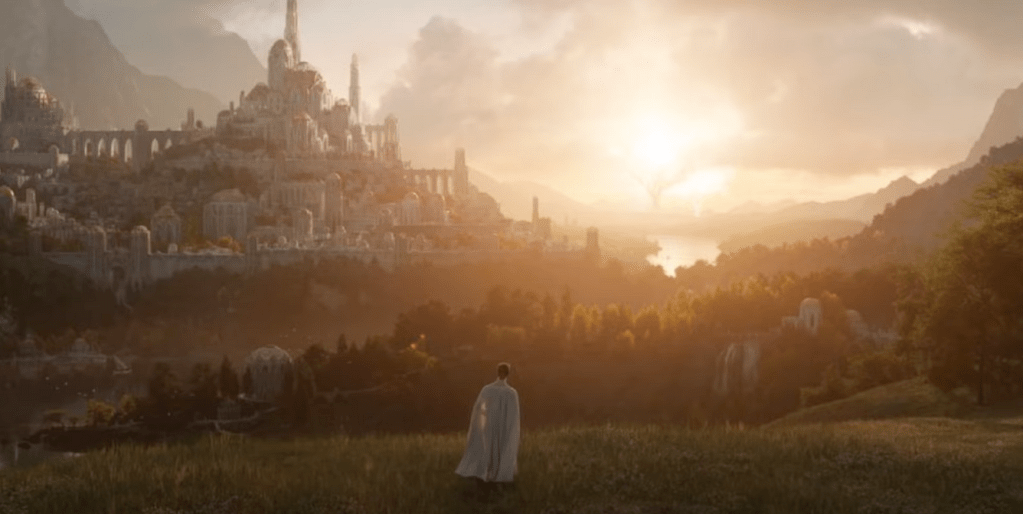

The Lord of the Rings: The Rings of Power

Obi-Wan Kenobi

Our Flag Means Death

Reacher

SAS: Rogue Heroes

The Sandman

Severance

Slow Horses, S1 & S2

Star Trek: Lower Decks, S3

Star Trek: Strange New Worlds

Stranger Things, S4

We Own This City

Wednesday

Yellowjackets

MY FAVOURITE SHOW: Andor

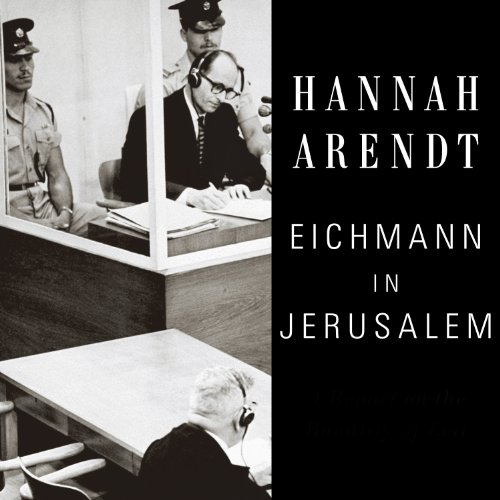

I didn’t think Star Wars could surprise me anymore, and the downward trajectory described by The Mandalorian (really good), The Book of Boba Fett (meh), and Obi-Wan Kenobi (see below) didn’t fill me with hope when I saw the trailer for Andor. But … holy crap. There’s a temptation to say it’s nothing like Star Wars, except that aesthetically and texturally it’s the most Star Wars thing I think I’ve ever seen. And it gives substance to all the other iterations: here are the inner workings of the polyglot galaxy, seen from the lower classes, the workers, the functionaries, the mid-level bureaucrats; in the depiction of the Empire’s intelligence officers and its carceral system we see the banality of evil. The fascistic aesthetic George Lucas gave the Imperials was largely that—aesthetic, and the evil was the Dark Lord variety. In Andor we see how all that is sustained, and, perhaps more importantly, we see how resistance is fomented and sustained.

RUNNERS-UP: Bad Sisters, Our Flag Means Death, Severance

MY FAVOURITE PERFORMANCES

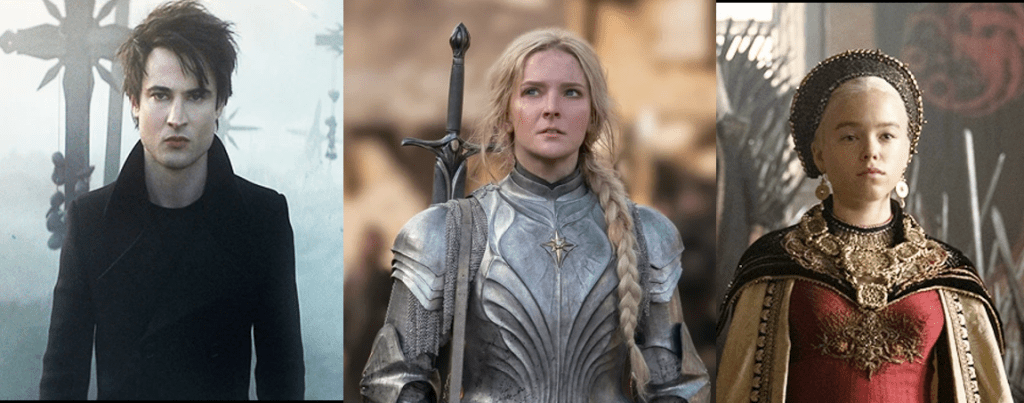

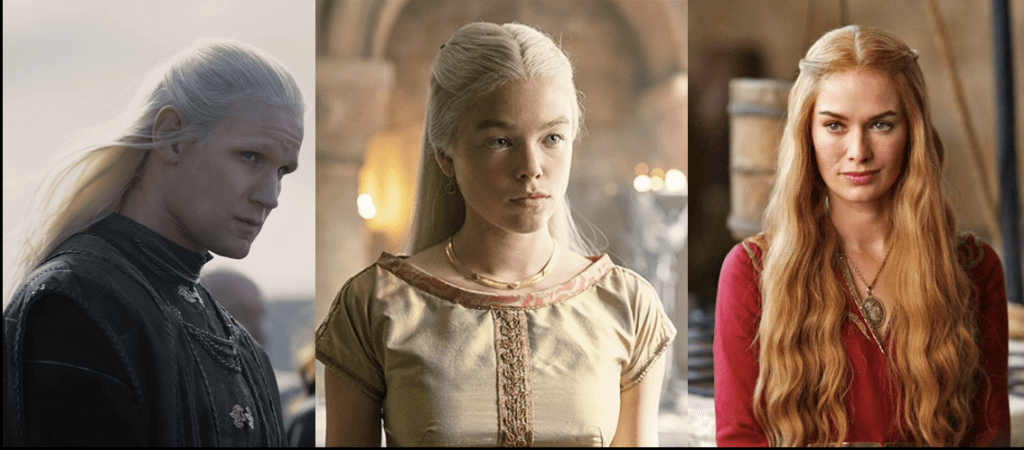

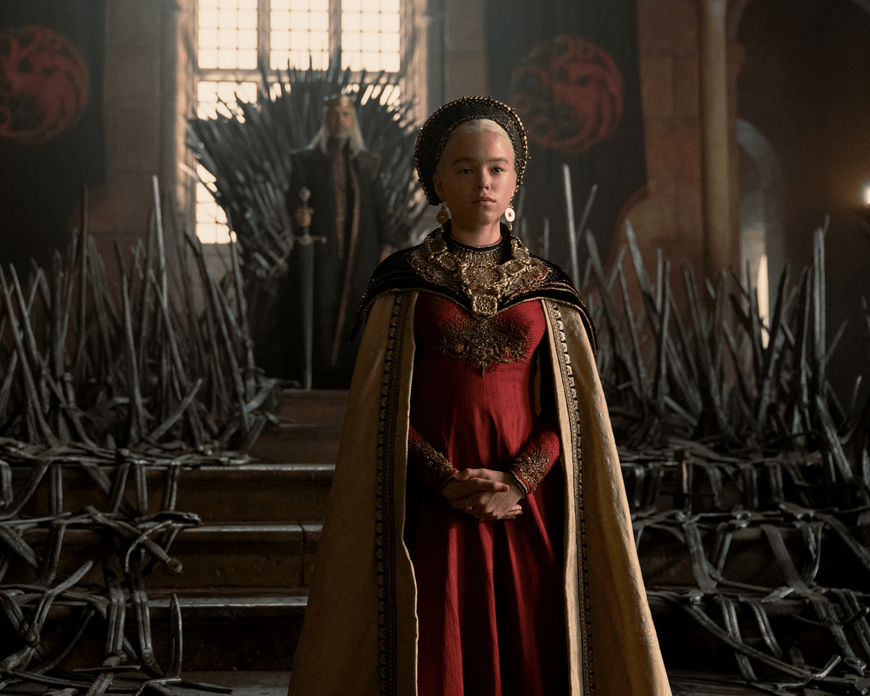

Milly Alcock as young Rhaenyra Targaryen in House of the Dragon

There were a number of really strong performances on this show, especially Paddy Considine as the weak, ineffectual, and ailing King Viserys—but it was Alcock as the young Rhaenyra who was most impressive. If the character was to be effective, the younger version needed to stick the performance deep; Alcock was wonderfully subtle in the role, portraying an adolescent with enough gravitas to hold her own against Matt Smith’s gleeful (Daemonic?) sociopathy and convince the audience that, yes, she was a worthy heir to the Iron Throne.

Gwendoline Christie as Lucifer Morningstar in The Sandman AND as Principal Larissa Weems in Wednesday

Like most devotees of the divine Ms. Christie, I first encountered her in the role of Brienne of Tarth in Game of Thrones and was smitten. Her casting as Lucifer in The Sandman was a master stroke, one of the few substantive deviations from Gaiman’s original comic (in which Lucifer was basically a diabolical David Bowie); Christie imbued the Prince of Hell with a cold and menacing hauteur that made it clear they were one of the few entities Dream should fear. She brought a similar icy precision to Weems in Wednesday, but managed at the same time to communicate the banked fires of anger at old wounds inflicted by Morticia that always left it in question whether she was ally or antagonist.

Rhys Darby and Taika Waititi as Stede Bonnet and Edward “Blackbeard” Teach in Our Flag Means Death

I mean … you can’t have one without the other on this show. And they’re both so good. The entire emotional armature of this series is based on these two seemingly antithetical characters coming together in friendship and then in love; for all the madcap absurdity of the show’s premise, it’s their relationship that makes OFMD more than just a comic confection.

Anson Mount as Captain Christopher Pike in Star Trek: Strange New Worlds

I have more to say about ST: SNW below, but suffice to say Anson Mount as Pike holds his own with every other Starfleet captain who has yet graced the screen. He was what made Discovery watchable for a time, so I was delighted when the powers that be saw the wisdom in doing yet another Star Trek with Mount in the captain’s chair.

Gary Oldman as Jackson Lamb in Slow Horses

Oldman is so very obviously having a ball in this role that he’d be worth watching even if the show wasn’t as excellent as it is. Jackson Lamb is a crapulescent, drunk, filthy, and frighteningly competent cold warrior spending his waning years in Brexit-era Britain’s MI5 lording it over a collection of misfits and losers who, rather than be fired, are exiled to “Slough House.” There they are tormented by the profane Lamb, while somehow falling ass-backwards into actual intelligence work.

Jenna Ortega as Wednesday Addams in Wednesday

It’s a daunting task for anyone to take on a role that has been so indelibly defined by another actor, as Wednesday Addams was by Christina Ricci. But Ortega’s performance is at once so consonant with what we expect of the character, while also being entirely her own, that she is truly astonishing to watch. The only comparable example I can think of—an actor owning an iconic role while being profoundly respectful of the work done by its principal precursor—is Mads Mikkelsen stepping into Anthony Hopkins’ shoes to play Hannibal Lecter. And that is about the highest compliment I can pay, not least because young Ms. Addams as portrayed by Ortega seems like she might be a match for Dr. Lecter. I mean, if Nevermore Academy ever needs a psychology teacher—or a head chef—that would be a crossover for the ages.

Stellan Skarsgård as Luthen Rael in Andor

He was amazing all the way through as the rebel spymaster, but really, it was this speech that articulated the ethical complexity of Andor and in so doing put him over the top.

Runners-Up: Britt Lower as Helly in Severance; Connor Swindells as David Stirling in SAS: Rogue Heroes; Christina Ricci as Misty in Yellowjackets; Siobhán McSweeney as Sister Michael in Derry Girls; Kirby Howell-Baptiste as Death in The Sandman; Amanda Seyfried as Elizabeth Holmes in The Dropout

FAVOURITE ENSEMBLE: Yellowjackets

Tough category! Severance is a competitive choice here—none of its individual actors make the list of my favourite performances because they’re all so good, and all so good in concert. Stranger Things suggests itself, as does Bad Sisters (somewhat more persuasively). Derry Girls is probably my sentimental favourite here, not least because its third season was its last. But just in terms of group dynamics, especially in the way it balanced the older versions of the characters against the younger, Yellowjackets takes the ribbon. (See below for more.)

BIGGEST RELIEF: Kids in the Hall AND The Sandman

This one is a tie between Kids in the Hall and The Sandman because they accomplished the same thing: they didn’t suck. The Kids are older, greyer, paunchier, but they’ve still got game—perhaps not now the strength they were in old days when they were (and remain) the best sketch show ever made, but they’re still weird, irreverent, and hilarious. It was a relief that, whatever self-reflexive gestures they make to now being an Amazon property, this isn’t just a late-career grab for cash and/or relevance.

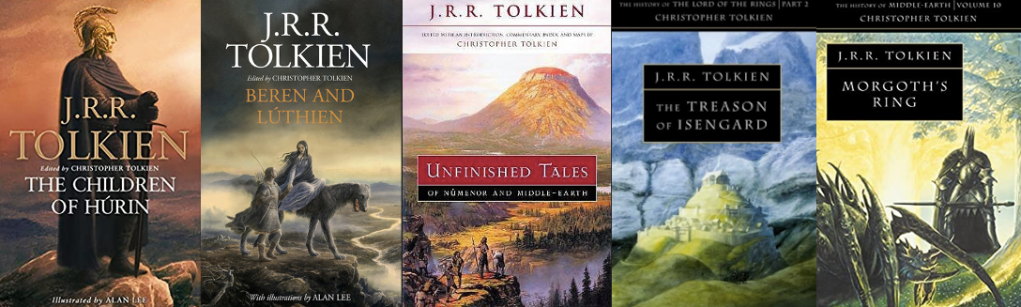

I read Neil Gaiman’s comic The Sandman obsessively when it first came out in the late 80s-early 90s. It was, indeed, the only comic that ever captured my attention. It was smart, it was literary, and the hero looked like Robert Smith of The Cure, all elements that appealed to my pretentious and broody late teen self. In the thirty years since there have been countless rumours about filmic adaptations, all of which mercifully came to naught. It was worth the three-decade wait.

BIGGEST DISAPPOINTMENT: Obi-Wan Kenobi

No contest. Not even close. Second place is so far back I can’t see it. This show was terribly, bafflingly bad. The best thing that can be said about it is that it was so bad it made me wait to watch Andor for several weeks, and then I could binge the first several episodes. It was as if the showrunners felt the need to be faithful to Lucas’ clunky writing and awkward direction on display in the prequels. What a criminal waste of Ewan McGregor’s talents. It made me hate a show that had Kumail Nanjiani in it, and I didn’t think that was possible.

MOST HEARTBREAKING CHARACTER DEATH: Eddie Munson, Stranger Things

Oh Eddie, we hardly knew ye.

FILLING THAT LOST-SHAPED HOLE: Yellowjackets

I mean, obviously. Plane crash in a remote location? Check. Gradually vanishing hopes of rescue? Check. Flash forwards to troubled lives back in civilization? Check. Character who thrives as a castaway? Check. Weird/uncanny/possibly supernatural stuff going on? Check, check, and check. And Yellowjackets has a lot of elements Lost didn’t: (1) cannibalism, or at least the strong suggestion of cannibalism; (2) a kickass 90s soundtrack; (3) a sense that the writers have a plan; (4) a preponderance of girls and women in the main roles; (5) a nuanced queer dimension to the story.

MOST FABULOUS OUTFITS: Chrisjen Avasarala, The Expanse

Yes, Wednesday Addams made a late-in-the-game play for this category—especially with her dress at the dance—but the foul-mouthed diplomat-turned-politico Chrisjen Avasarala, played with magnificent relish by the beautiful Shohreh Aghdashloo, was always clad in dresses that must have been a costumer’s dream to create.

GUILTIEST PLEASURE: Reacher

I will confess I’ve always found the Tom Cruise movie Jack Reacher eminently watchable, though it is intensely hated by fans of the Lee Child novels. The Jack Reacher of the fiction is tall and muscled like an ox, while Cruise is manifestly not. Hence, the series is a corrective: featuring a title character played by someone who is six-foot-massive with Schwarzeneggerian musculature (“fists like Christmas hams” is the line always quoted from the novels) matched with an intellect so perceptive and shrewd it puts to shame the grey matter of such girly-men as Holmes and Poirot. The show’s narrative framework is simple and satisfying: Reacher uses his superior intellect to identify the bad guys, then employs his Christmas-ham fists to beat the almighty shit out of them. Rinse and repeat until they’re all dead or unconscious, mystery solved, catch the first bus out of town. Season two awaits.

JOKE DENSITY: Derry Girls

Really, this was no contest. The closest second place is Our Flag Means Death, which does feature some sustained laugh-out-loud sequences. There is also the scene in Bad Sisters in which the sisters look on in shock and horror as their loathed brother-in-law, having been roofied for reasons I won’t spoil, falls pantsless into the marina harbour. But nothing can really match the asphyxiation-level laughter evoked by Derry Girls. And if you’re skeptical, I give you a brilliant cameo from an Irish actor with a particular set of skills.

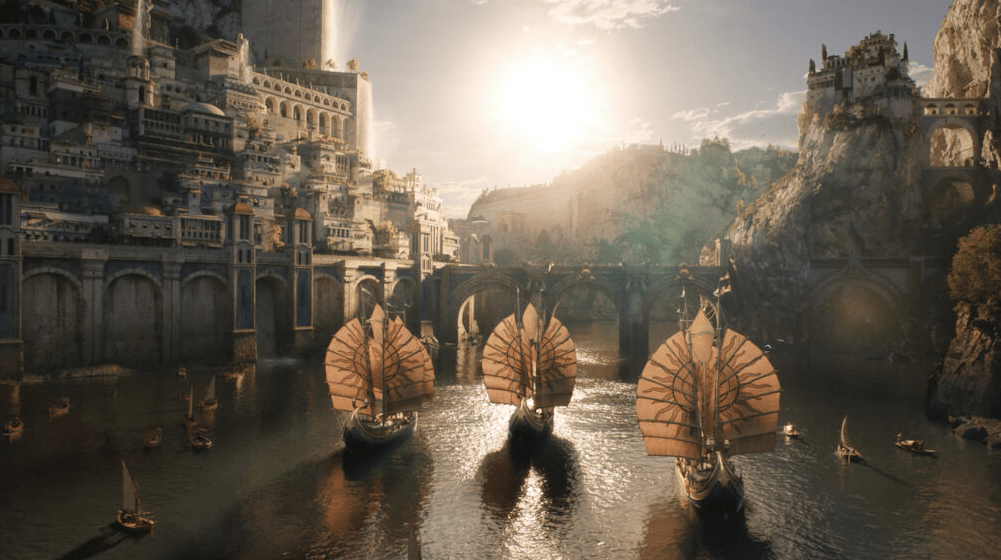

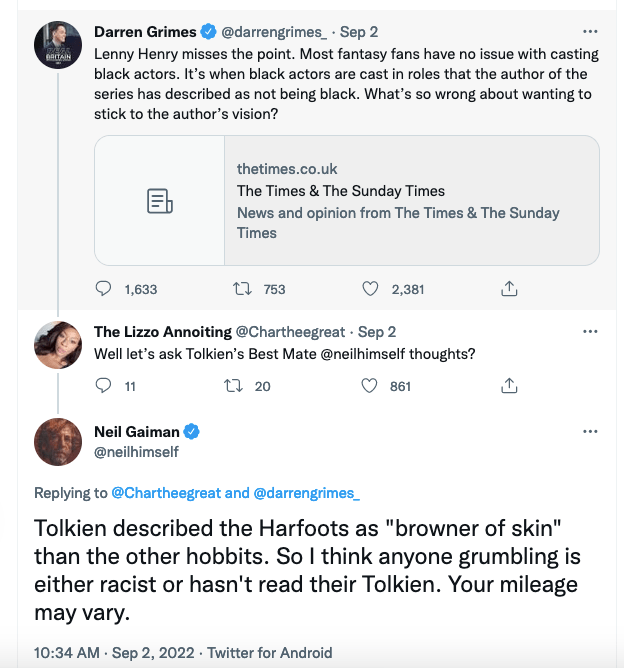

BEST SMOULDER: Arondir and Bronwyn in The Rings of Power

Peter Jackson gave us an object lesson in how not to do inter-legendarium romance in The Hobbit with Kili the dwarf (Aidan Turner) and Tauriel the elf (Evangeline Lilly). Rings of Power does it right: wood-elf Arondir (Ismael Cruz Córdova) harbours a love for the human healer Bronwyn (Nazanin Boniadi), who obviously reciprocates his feelings—but because of the tensions existing between the humans of her village—on probation for their earlier generations having served Sauron—and the elves (who act, essentially, as their probation officers), elf-human love is frowned upon by both camps. Also, there’s the fact that Arondir is so repressed and straitlaced that he might as well be a Vulcan (got the ears for it, anyway). But it’s because they play it in such a restrained way—and also because both of them are, as Derek Zoolander would say, really really ridiculously good looking—that I kept waiting for the air between them to ignite when they looked at each other.

BEST ADAPTATION: Slow Horses

In terms of pure fidelity to the text, Slow Horses is basically a beat-by-beat realization of the novel on which it’s based. I know this because after having watched the first season I picked up the novel Slow Horses and read it … and it’s a testament to Mick Herron’s writing that, even though that fidelity meant there were no surprises, I still tore through it in a few days. I then went out and got the second novel in the series, and then the third, and so on until I’d read all eight extant Slough House novels over the course of the summer. I should add that this show is the best adaptation even with The Expanse as a contender.

BEST COMFORT FOOD: Star Trek: Strange New Worlds

This is another competitive category, with Derry Girls and Star Trek: Lower Decks putting in strong showings. But Strange New Worlds was very much like a return to form: Star Trek as it used to be done, and an object lesson in the principle that you can do a smart TV show engaging with substantive topics (trauma, identity, bigotry, and all the usual ethical quandaries Star Trek loves to get into) without making things both literally and figuratively dark. After Discovery and Picard, it’s nice seeing a starship bridge that doesn’t look like it’s lit for a séance.

MOST DISCONCERTING CASTING: various characters, We Own This City

To be fair, the casting in We Own This City is only going to weird out people who obsessively watched The Wire, but then I have to imagine that would account for a large portion of its viewership. I’ve always delighted in Wire-spotting on other shows, such as when Ellis Carver (Seth Gilliam) shows up on The Walking Dead or Tommy Carcetti (Aidan Gillen) continues his political turn to the dark side on Game of Thrones, but it feels like a head fake when a Wire actor shows up in the almost identical context of We Own This City playing a dramatically different character. To be fair, the only really jarring example is that of Jamie Hector, who played the terrifying and coldly sociopathic drug boss Marlo Stanfield on The Wire; he shows up in We Own This City as Sean Suiter, a thoughtful, conscientious homicide cop troubled by his past experience with the corrupt Gun Trace Task Force. I think my favourite moment in this vein, however, is when Suiter is helped by a young Black patrol cop who looked vaguely familiar. My partner and I, recognizing him at the same moment, burst out in unison, “Holy shit, that’s Dukie!” (fans of The Wire will get me).

SADLY SAYING GOODBYE: Derry Girls and The Expanse

Two of my favourite shows had their series finales this past year, and they really couldn’t be more different. Derry Girls is a hilarious but heartfelt show about five high school friends (four of them girls, one an honorary girl) living typical adolescent lives in the atypical setting of Derry, Northern Ireland in the final years of sectarian strife. The show ended its third and final season with the ratifying of the Good Friday Agreement in 1998, a momentous event coinciding with the girls graduating. In contrast, The Expanse is quite possibly one of the best science fiction series ever, and I don’t make that claim lightly. This was a show that started strong in its first season, and just got better on all fronts with each successive season: better writing, better visual effects, and just better in terms of how the characters became family, both among themselves and with the audience.